When you’re optimizing your website or blog, do it with intent and strategy. The first step to finding what to change within your site or email strategy is to gather research and insights. And the best way to do that is to use A/B testing that’s designed like a scientific experiment.

An A/B or A/B/n test is simply a controlled experiment. This process is the foundation of scientific discovery. How do you know what determines the period of a pendulum? Well, let’s test each variable: mass, string length, and initial angle. But we have to do it one at a time so that we know which one actually affects the period.

Before you go running for the hills from all this physics talk, let’s use this concept to generate useful metrics you can use to measure your ROI.

Design an Experiment

Make sure to put in the initial effort to create a test that will give you the most informative and efficient results. Take some time to design your test according to the scientific method:

- State a problem

- Propose a hypothesis

- Test and perform an experiment

- Analyze results

- Repeat

First things first, be clear on the problem you want to evaluate. Are you wondering about the kind of traffic that visits your blog? Do you want to find the best design for your brand? Are you trying to bring in a certain kind of persona through your newsletter? Explicitly state what it is that you want to improve and in what channel.

Next, specify the factor you wish to examine. Are you looking to reduce the bounce rate of your website? Do you want to improve the CTR in your newsletter? Are you looking for the best placement of a CTA on your blog? Remember: just pick one.

At this point, you might be saying, but friend, there are so many factors I want to test! I want to optimize the design and the content of my website. What about the images used and the timing of my social posts? I have so many ideas for the placement and phrasing of my CTAs. Why do I have to choose just one factor at a time?

There are strategies for testing many factors, but to get the biggest impact on your optimization initiative, change one thing and one thing only—at least per test.

The key to isolating the factor with the largest influence on your site is what in science is called a “control.” The idea of a control is to change a single variable and keep all else the same.

With the pendulum experiment, you might change the mass hanging from the string, but each time you do, the string length and the initial angle stay the same. The latter two are the controls, making sure that all you’re testing is the mass. That way you’ll know that if the period changed significantly, then the mass has something to do with it. (Spoilers: for small swings, the period depends only on the length of the string, not the mass or the initial angle. Physics, right!?)

In digital marketing, you can do the same kind of thing. You might write emails that have the same content but different subject lines to test what entices readers to open the email. In a blog post, you might again keep the content consistent while you change the placement or the verbiage of a CTA. You might play with the amount of negative space on your website, keeping all the content the same.

Noticing a trend? For each of these examples, the content was our control. Really, all you’re testing is how to grab the attention of a reader. We know you’re already putting in the effort to produce the best content for your customers. The point of this testing is to make sure all that effort isn’t wasted.

Who Ya Gonna Ask?

So, you’ve come up with a brilliant plan for testing one factor while keeping all other factors as the control. Excellent. But all that planning will go to waste if you don’t consider who’s going to see it. An important element of designing an experiment is appropriate sampling.

There are three aspects to consider when putting together your sampling group:

1. Randomization

To ensure that what you’re testing is true in all cases, you have to apply your test to a randomized group of individuals. The point of this is to eliminate—or at least reduce—any biases.

To accomplish this, assign each treatment by some random process. Most A/B testing technology already has this feature built in (if yours doesn’t, go with someone else). But it’s important that you understand why randomization matters.

Consider the following example:

Your website has been performing decently for a while now, but you want to see if it can be even better. You decide to change the design slightly. You assign the control to be the current design of your website and the variation to be a new color scheme. You know sampling is critical, so you try to reach a wide variety of people by displaying the new design for the first half of the day and returning to the control for the second half.

Good job, right? Well…

In this example, you may have inadvertently introduced a bias. Maybe people who go online in the morning are naturally more attracted to the blue of your new design, and the afternoon people have always loved your orange. Your results would show no difference between the two designs, but what really happened is that you showed each design to a different group that already had its own preference. This is called confounding. Your A and B options were given to two different groups, and the results reflected the difference between the groups, not the difference between your options. Not what you were looking to discover.

A better way would have been to use a randomizer that displays one of your two website options, chosen at random, every time someone visits the site throughout the day. The comparison would then be far more trustworthy because the inherent biases would have been significantly reduced by your sampling method.
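If you’re curious what such a randomizer might look like under the hood, here is a minimal sketch in Python. It is not how any particular A/B testing tool works. The function name `assign_variant`, the experiment label, and the visitor IDs are all made up for illustration. The sketch hashes a visitor ID so that the split behaves like a coin flip across visitors, while a returning visitor keeps seeing the same version.

```python
import hashlib

def assign_variant(visitor_id, experiment="homepage-colors", variants=("A", "B")):
    """Map a visitor to a variant: effectively random across visitors, stable per visitor."""
    # Hash the (hypothetical) experiment name together with the visitor ID so
    # that a different experiment would split the same audience differently.
    key = f"{experiment}:{visitor_id}".encode("utf-8")
    digest = hashlib.sha256(key).hexdigest()
    # Treat the hash as a big integer and use it to pick one of the variants.
    return variants[int(digest, 16) % len(variants)]

# Tally the split for 10,000 made-up visitor IDs, regardless of time of day.
counts = {"A": 0, "B": 0}
for i in range(10_000):
    counts[assign_variant(f"visitor-{i}")] += 1
print(counts)
```

Because the assignment depends only on the visitor and not on the clock, morning and afternoon visitors end up mixed into both groups.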

2. Replication

If you ask one person what they think of your new site, that would be a very poor sample size, obviously. Even asking 25 people might be too small a size to get significant results.

In any experiment, there is an element of what is called “error.” In science, this word does not have the same meaning as it does in the rest of the world, where it means “mistake.” Rather, it means that the data we collect will always have a bit of uncertainty around it. This is a perfectly normal and natural part of conducting science. But we do have to account for it.

One way of reducing the uncertainty in an experiment is to increase the sample size. To fully explain why would be to drag you through a crash course in error analysis, which I won’t do (unless, of course, you want to talk about it, because it’s awesome!). Suffice it to say that the more times you sample, the less the uncertainty in any single sample matters. Thus, overall, your uncertainty is lower. In the pendulum experiment from earlier, we might time a single period, which would have a certain amount of uncertainty. But if we were to time 20 consecutive periods and divide by 20 to get a single period, the timing uncertainty would also be divided by 20.

The same idea maps across to digital sampling. The more people you include in a test, the less your results are swayed by random noise in the data. A larger sample also makes it less likely that a chance imbalance between your groups skews the comparison. The result is a fairer split and more reliable results.
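To put rough numbers on this, here is a small Python sketch. It assumes a made-up click-through rate of 10% and uses the standard error of a proportion, which for independent visitors shrinks with the square root of the sample size. The function name `standard_error` and the sample sizes are illustrative only.

```python
import math

def standard_error(rate, sample_size):
    """Standard error of an observed rate (e.g. click-through) from sample_size visitors."""
    return math.sqrt(rate * (1 - rate) / sample_size)

# Print an approximate 95% margin of error for a hypothetical 10% rate.
for n in (25, 100, 1_000, 10_000):
    margin = 1.96 * standard_error(0.10, n)
    print(f"n={n:>6}: 10% plus or minus {margin:.1%}")
```

With only 25 people, the margin is about as large as the rate itself, which is why a small sample rarely tells you much.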

3. Blocking

Blocking is a means of accounting for certain biases that may be found in any group while still maintaining randomness in your sampling. The technique is to first divide your sample into homogeneous groups and then to conduct a randomized test within each group. That way, you can see trends within each group.

In the social sciences, this usually translates to age or gender, but in digital B2B marketing, you can apply blocking methods to your targeted personas.

If you’ve set up your gated content and subscription page with the appropriate questions, you probably have the persona information for each email address tagged in your database. With that information, you can conduct A/B tests within each group to see which subject line appeals to that persona over others.

Blocking is a way to gather even more information about a single variable.
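As a rough illustration, blocking by persona might look like the Python sketch below. The email addresses, persona labels, and the function name `blocked_assignment` are all hypothetical. The idea is simply to group subscribers by persona first and then split each group at random between the two subject lines.

```python
import random
from collections import defaultdict

def blocked_assignment(subscribers, seed=42):
    """Randomly split subscribers into A and B within each persona block.

    subscribers: list of (email, persona) pairs. Returns {email: variant}.
    """
    rng = random.Random(seed)  # fixed seed only so the sketch is repeatable
    blocks = defaultdict(list)
    for email, persona in subscribers:
        blocks[persona].append(email)

    assignment = {}
    for emails in blocks.values():
        rng.shuffle(emails)        # randomize order inside the block
        half = len(emails) // 2    # first half gets A, the rest get B
        for email in emails[:half]:
            assignment[email] = "A"
        for email in emails[half:]:
            assignment[email] = "B"
    return assignment

# Made-up subscriber list with two hypothetical personas.
subscribers = [(f"cmo{i}@example.com", "CMO") for i in range(6)]
subscribers += [(f"dev{i}@example.com", "developer") for i in range(10)]

for email, variant in sorted(blocked_assignment(subscribers).items()):
    print(email, variant)
```

Each persona ends up with its own balanced A and B groups, so you can compare subject lines within a persona rather than across the whole list.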

All the Right Controls in All the Right Places

Once you have designed a robust experiment with controls in place and sampling accounted for, put it out there to be tested. But for how long?

There’s no one-answer-fits-all here. Websites may need more than a day to gather adequate data, but emails don’t last long in anyone’s mailbox (well, they do in mine, but that has nothing to do with the subject line verbiage). Knowing how long to leave a test up is a bit of an experiment in itself. Play around with it until you feel confident in your data.
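If you’d rather start from a rough number than from pure trial and error, one common approach is to estimate how many visitors each version needs and divide by your daily traffic. The Python sketch below uses the usual normal-approximation formula for comparing two rates. The 5% significance level and 80% power are conventional defaults, not anything prescribed here, and the 10% baseline, 12% target, and 500 daily visitors are made-up inputs.

```python
import math
from statistics import NormalDist

def visitors_per_variant(baseline_rate, expected_rate, alpha=0.05, power=0.80):
    """Approximate visitors needed per variant to detect the given change in rate."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided significance level
    z_power = NormalDist().inv_cdf(power)          # desired chance of spotting a real effect
    variance = (baseline_rate * (1 - baseline_rate)
                + expected_rate * (1 - expected_rate))
    effect = expected_rate - baseline_rate
    return math.ceil((z_alpha + z_power) ** 2 * variance / effect ** 2)

def days_needed(n_per_variant, daily_visitors, variants=2):
    """Days required if daily traffic is split evenly across the variants."""
    return math.ceil(n_per_variant * variants / daily_visitors)

n = visitors_per_variant(baseline_rate=0.10, expected_rate=0.12)
print(n, "visitors per variant")
print(days_needed(n, daily_visitors=500), "days at 500 visitors per day")
```

Treat the output as a starting estimate. Smaller expected improvements or lower traffic push the duration up quickly.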

After conducting your experiment, gather your data to see what the test has revealed. Keep an open mind at this point so that you don’t introduce your own bias into the mix. And be critical of what you find. Careful analysis is the final piece to the puzzle of a successful experiment.
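One simple way to be critical of what you find is to check whether the gap between A and B is bigger than chance alone would explain. The Python sketch below runs a two-proportion z-test, which is one common choice rather than the only valid analysis. The function name and the conversion counts are invented for illustration.

```python
import math
from statistics import NormalDist

def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Return (z, two-sided p-value) for the difference between two conversion rates."""
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    # Pool both groups to estimate the rate under "no real difference".
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (rate_b - rate_a) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value

# Made-up results: 100 of 1,000 visitors converted on A, 150 of 1,000 on B.
z, p = two_proportion_z_test(100, 1000, 150, 1000)
print(f"z = {z:.2f}, p = {p:.4f}")
```

A small p-value suggests the difference is unlikely to be noise. A large one means you can’t tell the versions apart with the data you have.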

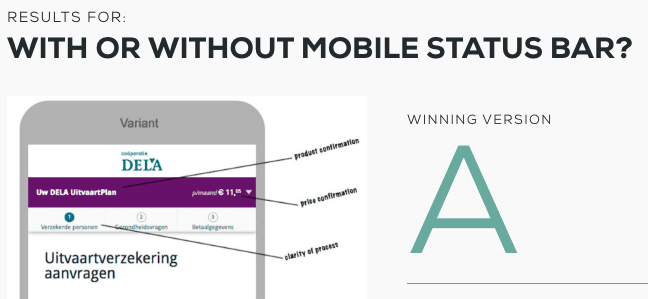

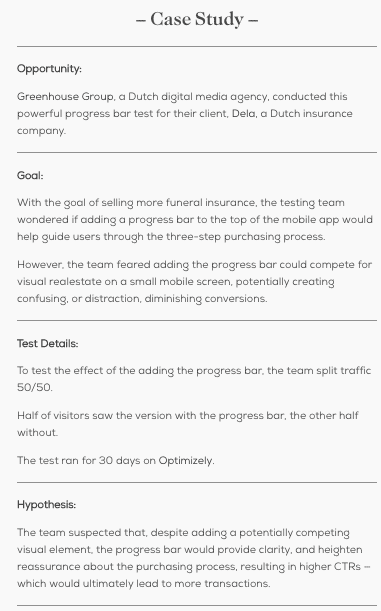

If you want more examples of how to get amazing results from A/B testing, Behave is a great resource for understanding how to design and analyze A/B tests for your site.

They conduct monthly tests and share them with subscribers, delineating the whole process of how they got there, from the statement of the problem to hypothesis to results and analysis.

Multi-Factor Designs

After your analysis, you may find that you want to tweak something else. At this point, feel free to change more factors for subsequent tests.

Multi-factor experiments depart from the one-variable-at-a-time approach described above, but they are useful for small revisions of a design. Your initial experiment that had the simpler A/B structure should have narrowed down the big changes that your channel required.

Now you can implement A/B/n testing, which compares several variations at once, or a multivariate test, which reveals data about more factors and helps you understand the relationship between elements in the channel. A multi-factor design is useful for refining the optimization of a channel.
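To get a feel for how quickly a multi-factor test grows, the Python sketch below lists every combination of a few factors (a full factorial design). The factor names and their options are hypothetical examples, not recommendations.

```python
from itertools import product

def full_factorial(factors):
    """Return every combination of factor levels as a list of dicts."""
    names = list(factors)
    return [dict(zip(names, combo)) for combo in product(*factors.values())]

# Hypothetical factors for a blog CTA, with two options each.
factors = {
    "headline": ["current", "benefit-led"],
    "cta_text": ["Sign up", "Get started"],
    "cta_position": ["top", "bottom"],
}

variants = full_factorial(factors)
print(len(variants), "variants to test")
for variant in variants:
    print(variant)
```

Every extra factor multiplies the number of versions, and each version gets a smaller share of your traffic. That is one reason to save multi-factor tests for refinements.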

Final Thoughts

The most important takeaways:

- Keep controls in your test to isolate a single factor

- Create a large, random sample that reduces the biases of the test

- Play with the timing of an experiment

- Carefully analyze the results and reduce your own bias

- Repeat and refine experiments, incorporating multi-factor strategies

Now go out there and start making your website/emails/blog/etc. the best it can be!